- Manual SEO reporting costs 10-client agencies $2,250–$5,000 monthly and produces data that's stale on arrival.

- Real-time domain snapshots fetch indexability, on-page, link, and load signals on demand instead of via scheduled crawls.

- Actionable SEO scores weight issues by ranking impact and map directly to specific developer fixes, not vanity numbers.

- Transparent, live reporting correlates with 40–60% longer client relationships and addresses the leading cause of SEO churn.

- Integrated SEO platforms are replacing fragmented tool stacks because batch-era architectures can't deliver true live data.

The average SEO agency spends three to five hours per client per month building reports by hand, then ships reports describing a website that, in many cases, no longer exists in the form described. For a ten-client agency, that is between $2,250 and $5,000 a month spent on reporting mechanics rather than campaign work, and the output is already aging the moment the PDF lands in a client’s inbox. That is the quiet scandal underneath modern SEO reporting: not that the data is wrong, but that it is old.
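The cost range above can be reproduced with simple arithmetic. The sketch below is illustrative only: the hours per client come from the figures above, but the hourly rates ($75 and $100) are assumptions inferred from the totals, not numbers the article states.

```python
# Back-of-envelope reporting cost for a ten-client agency.
# ASSUMPTION: hourly rates of $75 and $100 are inferred from the
# $2,250-$5,000 range; they are not stated in the source.
CLIENTS = 10


def monthly_reporting_cost(clients, hours_per_client, hourly_rate):
    """Dollars spent per month on hand-built reports."""
    return clients * hours_per_client * hourly_rate


low = monthly_reporting_cost(CLIENTS, 3, 75)    # 30 hours at $75/hour
high = monthly_reporting_cost(CLIENTS, 5, 100)  # 50 hours at $100/hour
print(f"${low:,.0f} to ${high:,.0f} per month")
```

Swap in your own blended rate and client count to see what the same hours cost your agency.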

Real-time domain snapshots are the structural fix. They replace the scheduled crawl with a live capture, and they replace the estimate with an answer.

- Legacy crawl tools introduce days of lag between data and decisions.

- Real-time domain snapshots capture indexability, on-page, and link signals live.

- An actionable SEO score must map to specific fixes, not vanity benchmarks.

- Transparent live data is now tied to measurably longer client retention.

- The SEO platform is replacing the standalone tool stack for a reason.

Why SEO Reporting Has a Freshness Problem

Most SEO data your agency presents was true yesterday, not today.

Traditional crawl-based SEO tools operate on schedules — weekly, biweekly, or monthly recrawls — and even the platforms that pull from Google’s own pipes inherit a built-in delay. Google Search Console’s regular performance data is updated with a 2–3 day delay, while only the Last 24 Hours tab is close to real-time. When Google’s data pipeline stutters, that lag balloons: a 2025 Search Engine Land report described weeks-long delays in Performance reports, with normal latency sitting between two and six hours after the fix.

Now stack that delay on top of weekly agency crawls and a monthly reporting cadence. The recommendation you make in a Tuesday strategy call may be based on a snapshot taken eleven days earlier — describing a site that has since pushed new code, lost backlinks, or quietly deindexed a template. Stale metrics produce confident, wrong recommendations.

What Is a Real-Time Domain Snapshot in SEO?

A real-time domain snapshot is a live capture of a domain’s technical and content state at the moment of request.

Instead of waiting for a scheduled crawler to come around again, the platform fetches the domain on demand and parses what it finds — right now. No batch queue. No overnight processing window. No “data will refresh by Friday.”

Contrast that with the traditional model. Batch data processing collects, queues, and recomputes on a fixed schedule, which means the score you see is always a reconstruction of a past state. Live domain analysis flips the dependency: the score is computed when you ask the question.

A useful snapshot captures the signals that actually move rankings:

- Site indexability signals: robots directives, canonical chains, status codes, noindex flags.

- On-page structure: titles, headings, schema, internal link graph.

- Backlink signals: referring domains visible at the moment of capture.

- Load behavior: TTFB, render-blocking patterns, Core Web Vitals proxies.

- Content state: word count, freshness markers, duplicate detection.
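To make the signal list concrete, here is a minimal sketch of pulling a few indexability and on-page signals out of a single fetched page. It assumes the response (status code, headers, HTML) has already been retrieved; `PageSignals` and `snapshot_page` are hypothetical names for illustration, not any platform's API, and a real snapshot would also cover links, load behavior, and duplicate detection.

```python
from dataclasses import dataclass
from html.parser import HTMLParser
from typing import Optional


@dataclass
class PageSignals:  # hypothetical container, not a real platform type
    status_code: int
    title: Optional[str] = None
    canonical: Optional[str] = None
    noindex: bool = False
    h1_count: int = 0
    word_count: int = 0


class _SignalParser(HTMLParser):
    """Collects title, canonical, robots meta, h1 count, and visible words."""

    def __init__(self):
        super().__init__()
        self.title_parts = []
        self.canonical = None
        self.noindex = False
        self.h1_count = 0
        self.words = 0
        self._in_title = False
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta" and (a.get("name") or "").lower() == "robots":
            if "noindex" in (a.get("content") or "").lower():
                self.noindex = True
        elif tag == "link" and "canonical" in (a.get("rel") or "").lower().split():
            self.canonical = a.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_title:
            self.title_parts.append(data)
        elif not self._skip_depth:
            self.words += len(data.split())


def snapshot_page(status_code, headers, html):
    """Build PageSignals from an already-fetched response."""
    parser = _SignalParser()
    parser.feed(html)
    parser.close()
    # A noindex can also arrive via the X-Robots-Tag response header.
    header_robots = ""
    for key, value in headers.items():
        if key.lower() == "x-robots-tag":
            header_robots = value.lower()
    return PageSignals(
        status_code=status_code,
        title="".join(parser.title_parts).strip() or None,
        canonical=parser.canonical,
        noindex=parser.noindex or "noindex" in header_robots,
        h1_count=parser.h1_count,
        word_count=parser.words,
    )


sample_html = """<html><head><title>Pricing</title>
<meta name="robots" content="noindex, follow">
<link rel="canonical" href="https://example.com/pricing">
</head><body><h1>Plans</h1><p>Three simple plans.</p></body></html>"""

signals = snapshot_page(200, {"Content-Type": "text/html"}, sample_html)
print(signals)
```

Keeping the fetch separate from the parsing means the same function can run on a live response or on a saved copy, which makes before-and-after comparisons straightforward.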

From Raw Signals to an Actionable SEO Score

Raw snapshot data is useless until it is synthesized into a score that tells someone what to do next.

An actionable SEO score is not a 0–100 vanity gauge. It is a weighted composite that maps directly to fixes — a noindex on a money page costs more points than a missing meta description, because the fix priority is not the same. A vanity score makes a client nod. An actionable score makes a developer open a ticket.
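One way to picture a weighted composite that maps to fixes is sketched below. The issue codes, the penalty weights, and the 100-point starting value are all hypothetical choices made for illustration; the point is only that each point lost carries a named fix, and that a noindex on a money page outweighs a missing meta description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    code: str  # hypothetical issue identifier
    url: str
    fix: str   # the concrete action a developer would take


# ASSUMPTION: illustrative penalty weights, not taken from any real tool.
WEIGHTS = {
    "noindex_money_page": 40,
    "broken_canonical": 20,
    "missing_h1": 8,
    "missing_meta_description": 3,
}


def score(issues):
    """Start from 100 and subtract each issue's weight, floored at 0."""
    penalty = sum(WEIGHTS.get(issue.code, 0) for issue in issues)
    return max(0, 100 - penalty)


def work_order(issues):
    """Return issues sorted so the costliest fix comes first."""
    return sorted(issues, key=lambda issue: WEIGHTS.get(issue.code, 0), reverse=True)


found = [
    Issue("missing_meta_description", "/about", "Write a meta description"),
    Issue("noindex_money_page", "/pricing", "Remove the noindex directive"),
]

print(score(found))
for issue in work_order(found):
    print(f"-{WEIGHTS[issue.code]:>2}  {issue.url}: {issue.fix}")
```

Because every deduction is tied to an `Issue` with a `fix`, the score doubles as a prioritized ticket list rather than a number to nod at.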

How agencies use the score in practice

- Triage: rank issues by score impact, not by gut feel or alphabetical plugin order.

- Scope: use the score delta to size retainers and justify hours.

- Set expectations: show clients what a realistic 30-day score lift looks like.

- Measure progress: rerun the snapshot after each sprint and show movement in hours, not weeks.
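The "measure progress" step amounts to diffing two snapshots. A minimal sketch, assuming each snapshot has been reduced to a mapping from an issue key to its penalty points (the keys and point values here are hypothetical):

```python
from typing import Mapping


def compare_snapshots(before: Mapping[str, int], after: Mapping[str, int]) -> dict:
    """Diff two snapshots expressed as {issue_key: penalty_points}."""
    resolved = sorted(set(before) - set(after))    # fixed since last run
    introduced = sorted(set(after) - set(before))  # new since last run
    recovered = sum(before[key] for key in resolved)
    lost = sum(after[key] for key in introduced)
    return {
        "resolved": resolved,
        "introduced": introduced,
        "score_delta": recovered - lost,
    }


# ASSUMPTION: illustrative issue keys and weights.
before_sprint = {
    "/pricing:noindex": 40,
    "/blog:missing_h1": 8,
    "/about:missing_meta_description": 3,
}
after_sprint = {
    "/about:missing_meta_description": 3,
    "/docs:broken_canonical": 20,
}

report = compare_snapshots(before_sprint, after_sprint)
print(report)
```

Reporting introduced issues alongside resolved ones keeps the delta honest: a sprint that fixes two problems and ships a new one shows both.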

This is also why data freshness matters at the AI-search layer. In 2025, 76.4% of web pages cited in AI Overviews were updated within the last 30 days, meaning the score has to reflect current state to predict current visibility.

Replace Guesswork in Agency SEO Reporting

The shift from guesswork to live evidence changes the texture of every client conversation.

A guesswork workflow looks like this: the strategist exports last week’s crawl, cross-references a month-old rank tracker, eyeballs GSC, and writes a recommendation that begins with “we believe.” That phrase is doing a lot of work. It is hedging against the possibility that the underlying data is wrong.

Real-time data removes the hedge. When a snapshot was taken seconds ago, the strategist can say “your /pricing template is currently returning noindex” instead of “we suspect indexation issues.” That is a different sentence with a different commercial value.

And clients reward the difference. According to a recent agency survey, nearly half of all agencies cite lack of transparency and unclear reporting as the leading causes of client churn, while agencies using transparent reporting approaches see client relationships extend 40–60% longer than industry averages. Considering SEO carries roughly a 38% client churn rate driven largely by expectation mismatches, reporting freshness is not a UX feature — it is a retention lever.

The Emergence of the SEO Platform — and What It Changes

Standalone SEO tools are being absorbed into integrated platforms because agencies can no longer afford the seams between them.

The old stack — one crawler, one rank tracker, one backlink tool, one reporting layer — created lag at every handoff. The emergence of the SEO platform consolidates snapshot capture, scoring, and reporting in a single environment, which is the only architecture that can deliver live data end-to-end. Sage SEO is one example of this category, built around real-time domain snapshots as the underlying primitive rather than as a feature bolted onto a batch crawler.

| Capability | Legacy Crawl-Based Tools | Modern SEO Platform |
|---|---|---|
| Data capture | Scheduled crawls (weekly+) | Real-time domain snapshots |
| Score basis | Last batch processed | Live signal synthesis |
| Reporting lag | Days to weeks | Seconds |
| Client confidence | “We believe…” | “As of now…” |

Here is the contrarian read: “real-time” is becoming a marketing label that some tools cannot honor, because their core architecture is still a queue. The agencies that win in 2026 will not be the ones with the prettiest dashboards — they will be the ones whose underlying data layer was rebuilt for live capture.

Frequently Asked Questions

What is a real-time domain snapshot in SEO?

A real-time domain snapshot is an on-demand capture of a website’s current technical, content, and link signals, processed instantly into an actionable SEO score. It replaces the scheduled crawl model with a live-fetch architecture.

Why are most SEO reports outdated before they are sent?

Because they combine batch crawl data with Google Search Console feeds that already carry a 2–3 day delay, then sit in a manual review queue. By the time the PDF is delivered, the underlying site state has often changed.

How is an actionable SEO score different from a regular score?

An actionable score weights issues by their direct impact on rankings and maps each point loss to a specific fix. A regular score reports a number; an actionable score generates a prioritized work order.

How do real-time domain snapshots improve client retention?

They let agencies replace hedging language with verifiable current-state evidence, which directly addresses the transparency gap most clients cite when they churn. Live data turns reporting into a trust-building ritual rather than a defensive one.

The Bottom Line: Live Data Is the New Floor

Static, batch-processed SEO reporting had a long run, and it earned its place when crawling the web at speed was expensive. That constraint is gone. Any agency still defending a weekly-crawl, manually-assembled reporting model in 2026 is not being thorough — it is being slow. Real-time domain snapshots are not a premium add-on; they are the new baseline that every other piece of the reporting stack now has to keep up with.


Share this

Related Posts

-

AI-First SEO: The Future of Search Engine Optimization

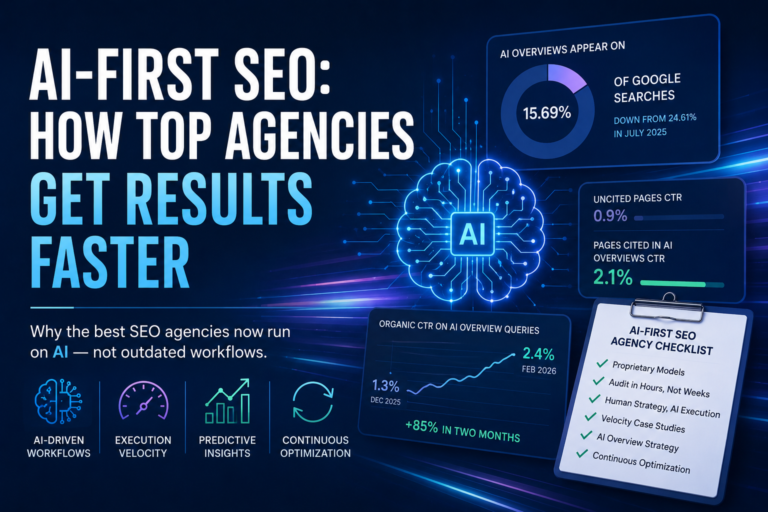

In November 2025, AI Overviews appeared on 15.69% of Google searches — down from a…

-

ContentOps: Streamlining Your SEO Workflow from Ideation to Publication

ContentOps: Streamlining Your SEO Workflow from Ideation to Publication Imagine a marketing team drowning in…

-

AI Content Writing Services: What Marketers Need to Know in 2024

AI content writing services promise speed, but winners in 2024 don’t outsource thinking to a…